zfs failed array recovery

2015-07-10

I have had a nice ZFS RAIDZ in operation for the last 7 years now, built from four 1TB drives.

The 2.6TB of overall storage I had was plenty, and while the drives were starting to show their age and performing worse and worse, I had no reason to replace them yet. After all, I don’t need MORE storage.

Then one evening, my desktop failed to mount a mapped drive, specifically the one pointing at the storage path. I found it odd, because my home directory was working.

There is a feeling of dread that you get when you realize you have not recently performed a full backup… I checked the TV, which happens to have my server connected to its VGA port, and I saw a LOT of interface errors writing to disk. What was worse, TWO out of four drives had failed.

So… about those backups…

Well, thankfully I had purchased enough Google Drive storage earlier in the year, and I had both my music and my family photos synchronized.

What I did not have backed up anywhere was:

- Family movies copied off of my in-laws’ camera

- Movies I had ripped from my physical collection (no big deal…)

- eBooks, lots of them

I was coming to terms with the data-loss. I did feel horrible, just awful, about the family videos though.

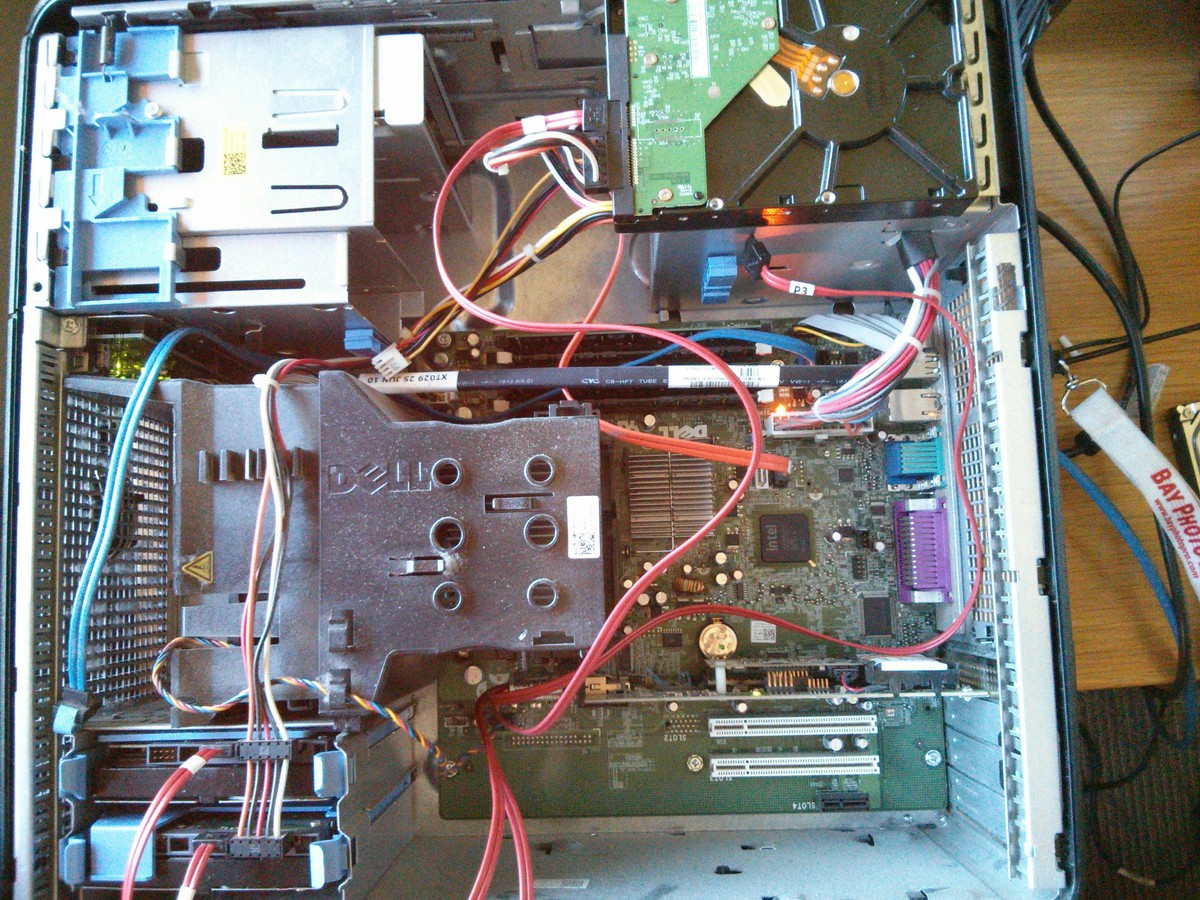

My server at home is in really cramped quarters, and I did not want to have to work in that kind of environment. So, I took the drives into the office and just let them sit there for a few weeks. Eventually, I had a free lunch hour in which I could take some time and work on them.

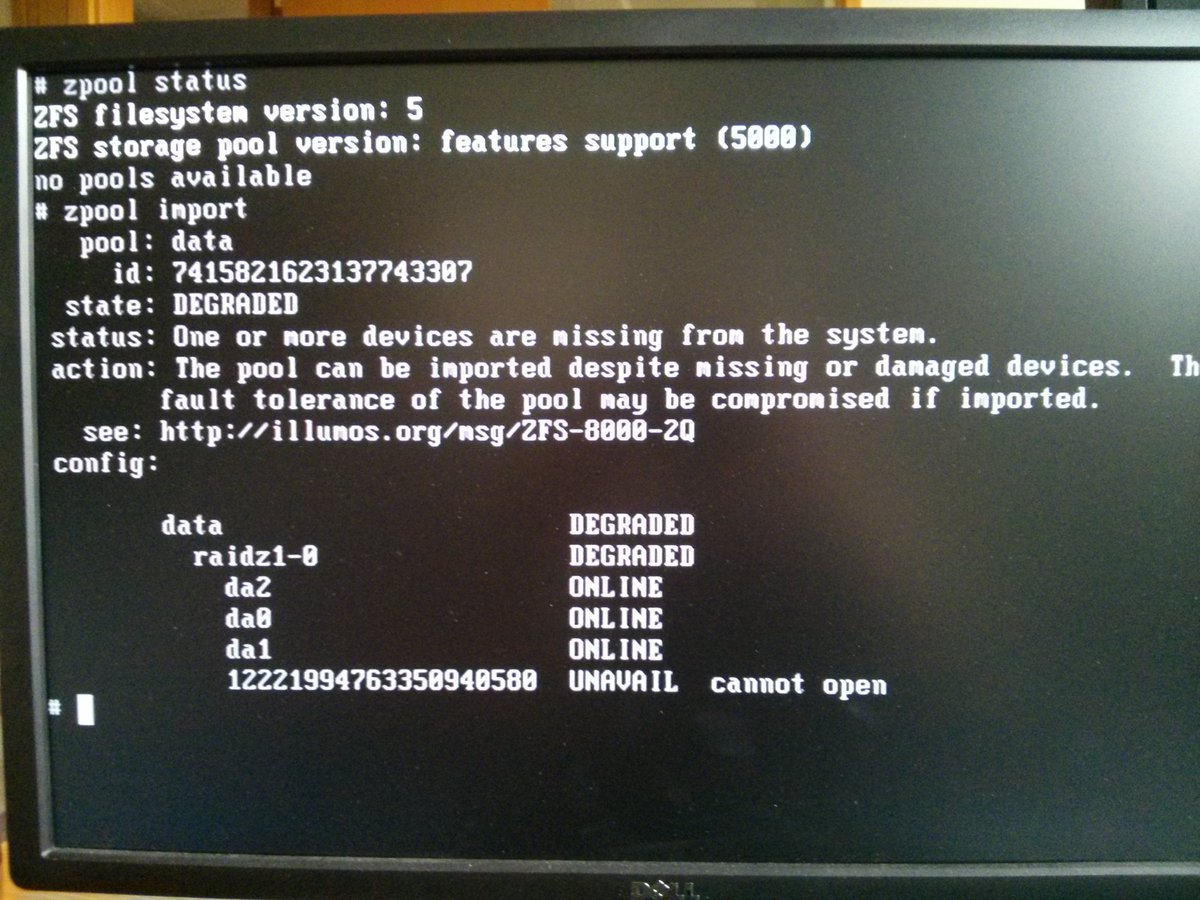

I installed all 4 drives into a spare system, and as expected, the raidz was in a failed state.

I noticed that the drive that had failed earlier was powering up, but its contents were too stale to allow the array to come up even in a degraded state.

The other drive, the one that recently failed, was not spinning up.

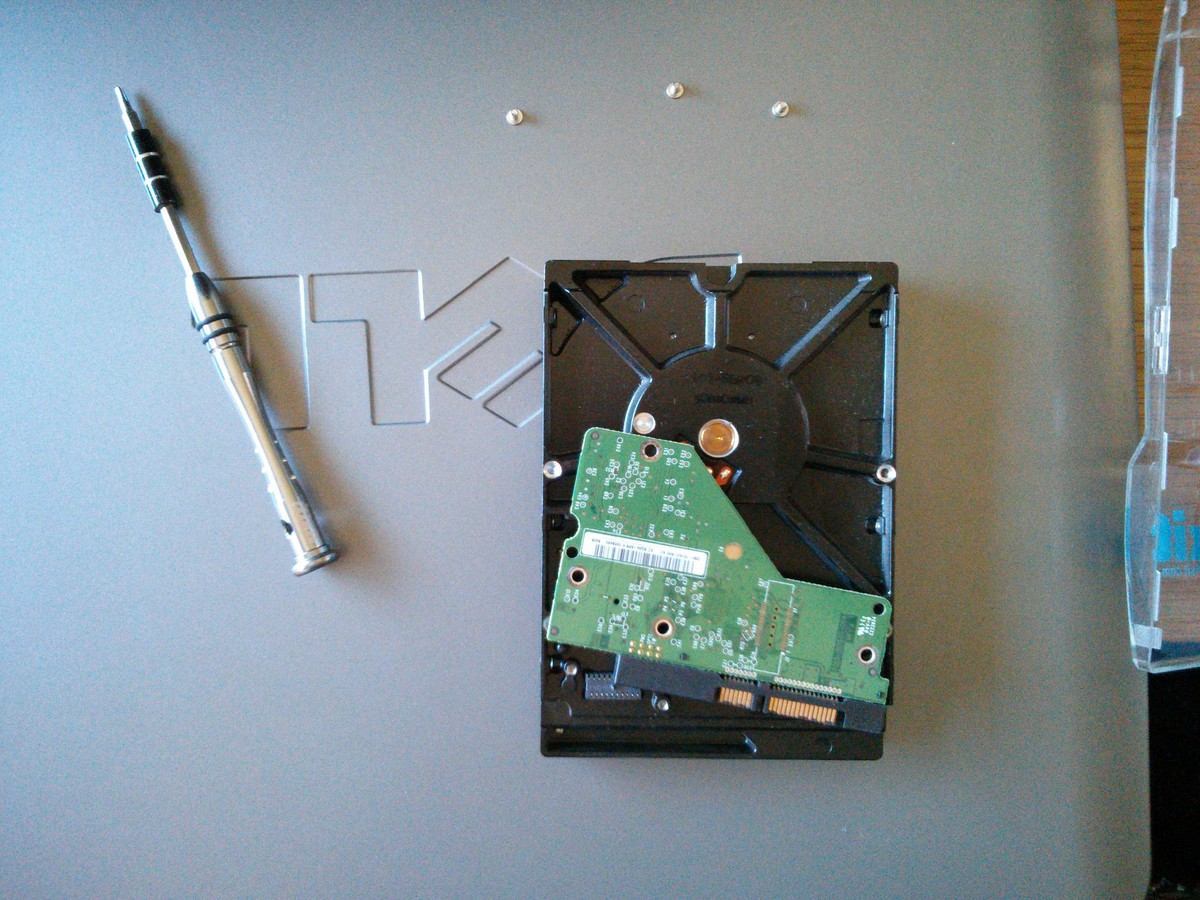

The next logical step was to cannibalize the drive that was functional but too out of date, in order to revive the one that had failed recently and would not power up. I ended up swapping the controller board, and that did the trick. The drive powered up!

Here goes nothing…

todo

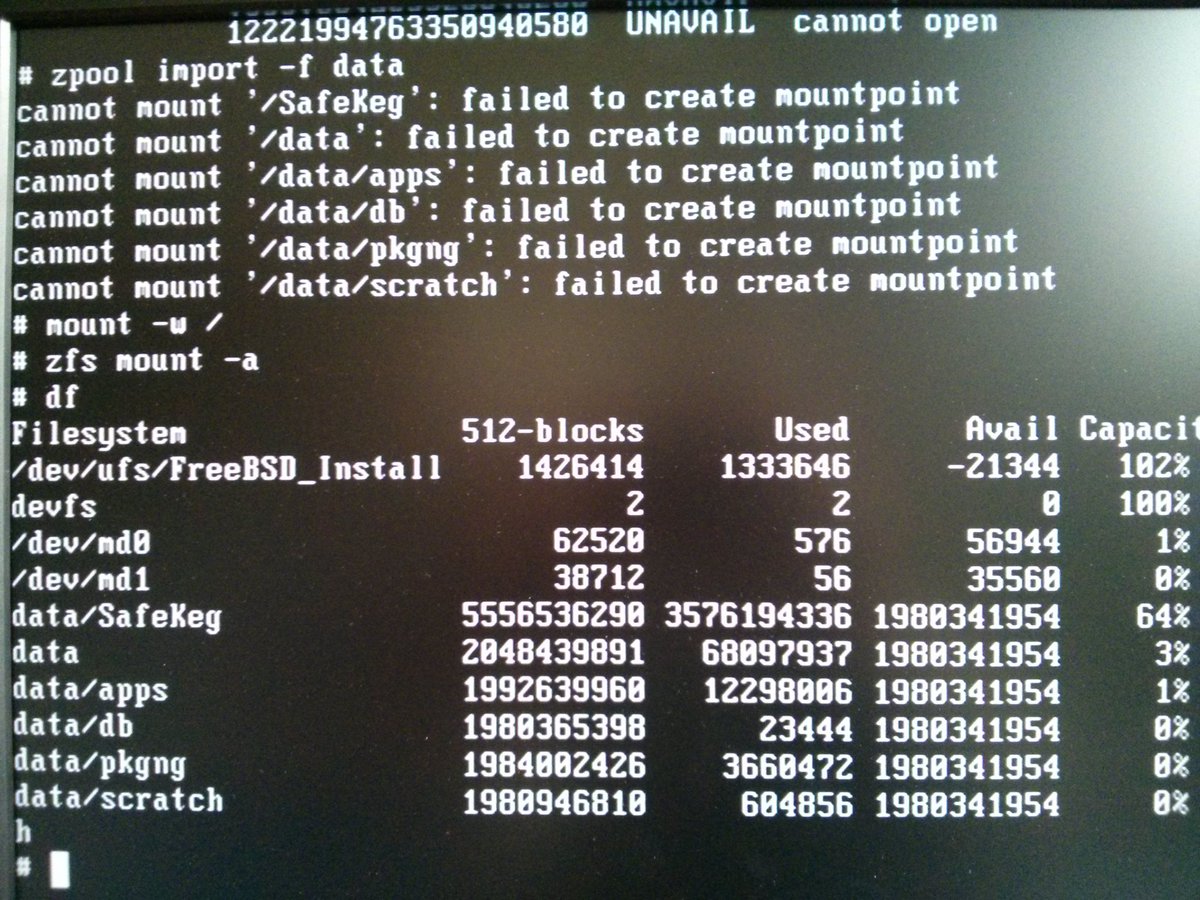

Now, it wasn’t perfect: the drive would start clicking and eventually freeze the filesystem with I/O timeouts. I was able to get the really important data off first, and then I started copying off items that were less critical. All of the irreplaceable data was copied off.

A zfs send | recv was impossible; I had to use “scp -r” to copy a few directories at a time.
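For anyone in the same spot, here is a rough sketch of how that piecemeal copy could be scripted. It is not what I actually ran. It assumes one `scp -r` call per directory, most important directories first, and it moves on when a copy fails or hangs so that one bad directory does not block the rest. The paths, host name, and one-hour timeout in the comments are made up for illustration.

```python
import subprocess
from typing import Callable, Iterable, List


def scp_dir(src: str, dest: str, timeout_s: int = 3600) -> bool:
    """Copy one directory tree with `scp -r`; return True on success."""
    try:
        # The timeout is an assumption: give up on a copy that hangs
        # (for example on I/O timeouts) instead of waiting forever.
        result = subprocess.run(["scp", "-r", src, dest], timeout=timeout_s)
    except (subprocess.TimeoutExpired, OSError):
        return False
    return result.returncode == 0


def copy_by_priority(dirs: Iterable[str], dest: str,
                     copy: Callable[[str, str], bool] = scp_dir) -> List[str]:
    """Copy each directory in the given order; return the ones that failed."""
    failed = []
    for src in dirs:
        if not copy(src, dest):
            # Keep going so later directories still get a chance.
            failed.append(src)
    return failed


# Hypothetical usage, most important data first:
#   leftover = copy_by_priority(
#       ["/tank/family-videos", "/tank/ebooks", "/tank/movies"],
#       "user@rescue-host:/rescue/")
#   print("still to retry:", leftover)
```

The returned list can be fed back in for another pass once the drive has had a rest. A process stuck in uninterruptible disk I/O may not die on the timeout, so treat this as a convenience, not a guarantee.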

Some lessons are to be learned. First, I should do what I do at work, and that is have an Icinga alert for a failed zpool. It’s not like I don’t know how to do these things.
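A minimal sketch of what such a check could look like, written as a Nagios-style plugin that Icinga can run. It is not the check I use at work. It assumes `zpool list -H -o name,health` is available on the host and uses the conventional plugin exit codes (0 OK, 1 WARNING, 2 CRITICAL, 3 UNKNOWN). Treating DEGRADED as a warning and everything else that is not ONLINE as critical is my own choice here, as are the function names.

```python
import subprocess
from typing import Tuple

# Conventional monitoring-plugin exit codes.
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3


def evaluate(listing: str) -> Tuple[int, str]:
    """Map `zpool list -H -o name,health` output to (exit code, message)."""
    worst = OK
    notes = []
    for line in listing.splitlines():
        fields = line.split()
        if len(fields) != 2:
            continue  # skip anything that is not "name health"
        name, health = fields
        if health == "ONLINE":
            level = OK
        elif health == "DEGRADED":
            level = WARNING   # assumption: still readable, but act now
        else:
            level = CRITICAL  # FAULTED, UNAVAIL, OFFLINE, ...
        worst = max(worst, level)
        notes.append(f"{name}={health}")
    if not notes:
        return UNKNOWN, "UNKNOWN: no pools reported"
    label = {OK: "OK", WARNING: "WARNING", CRITICAL: "CRITICAL"}[worst]
    return worst, f"{label}: " + ", ".join(notes)


def main() -> int:
    """Run zpool, print one status line, and return the plugin exit code."""
    try:
        out = subprocess.run(["zpool", "list", "-H", "-o", "name,health"],
                             capture_output=True, text=True, check=True).stdout
    except (OSError, subprocess.CalledProcessError) as exc:
        print(f"UNKNOWN: could not run zpool: {exc}")
        return UNKNOWN
    status, message = evaluate(out)
    print(message)
    return status

# As a plugin entry point, finish the script with: raise SystemExit(main())
```

Had something like this been in place, the first drive failure would have shown up as a WARNING long before the second drive took the pool down with it.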

Second, get some new drives! 7 years is a really long time for WD Green drives, especially when I leave them on 24/7.